Machine learning development is the engineering discipline of building systems that learn patterns from data: training statistical and deep learning models to make predictions, classify inputs, detect objects, or extract meaning from text without being explicitly programmed for each task.

Development spans the full model lifecycle: data collection and preprocessing with pandas and NumPy, feature engineering, model architecture selection, training with TensorFlow or PyTorch, evaluation via cross-validation and performance metrics, hyperparameter tuning, and deployment via Docker and REST API endpoints with MLflow versioning and post-deployment monitoring.

Below is the full range of ML services we deliver.

We build regression and classification models using scikit-learn, TensorFlow, and PyTorch, trained on your historical business data to forecast demand, predict churn, score leads, detect anomalies, and surface actionable patterns from structured datasets. Our pipeline covers data ingestion with pandas and NumPy, feature engineering, cross-validation, hyperparameter tuning, and MLflow experiment tracking, delivering models that improve continuously as new data accumulates.

We design and train deep neural network architectures for complex pattern recognition tasks, implementing feedforward networks, recurrent neural networks (RNN/LSTM) for sequential data, and custom architectures optimized for your dataset characteristics. Training pipelines are built with TensorFlow and PyTorch, tracked via MLflow, containerized with Docker, and deployed to GPU-accelerated cloud infrastructure with full model versioning and performance monitoring.

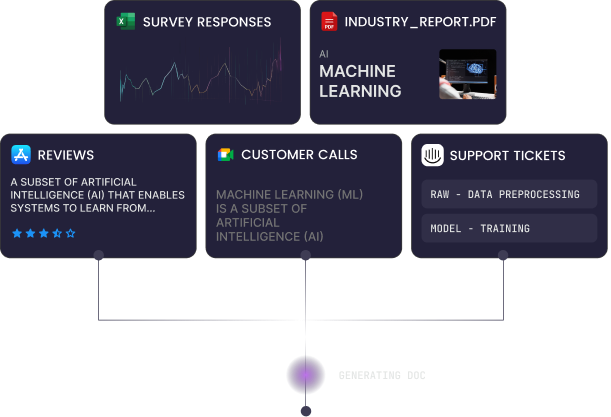

We build NLP systems using transformer models, BERT, and Hugging Face, covering sentiment analysis, named entity recognition (NER), text classification, document summarization, and keyword extraction, all trained on your domain-specific text data. Unlike a ChatGPT API integration, which calls a third-party model, our NLP models are fine-tuned on your proprietary corpus, giving you models that understand your industry terminology, your customer language, and your specific classification requirements.

We build computer vision pipelines using convolutional neural network (CNN) architectures, implementing image classification, object detection (YOLO, Faster R-CNN), image segmentation, and visual anomaly detection models trained on your labeled image datasets. Models are optimized via TensorFlow quantization and pruning to reduce size and inference latency, then deployed to cloud APIs or on-device via TensorFlow Lite and Core ML for real-time inference on iOS and Android without server dependency.
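To make the size reduction from quantization concrete, here is a minimal sketch of symmetric 8-bit weight quantization in plain Python. It illustrates the general idea only and is not TensorFlow's own conversion tooling; the function names and the sample weights are hypothetical.

```python
def quantize_symmetric_int8(weights):
    """Map float weights to integers in [-127, 127] plus one shared scale."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would work.
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float weights from the integer representation."""
    return [q * scale for q in quantized]


# Hypothetical layer weights, for illustration only.
weights = [-1.0, -0.42, 0.0, 0.13, 0.87]
quantized, scale = quantize_symmetric_int8(weights)
restored = dequantize(quantized, scale)

# Each weight now needs 1 byte instead of 4 (float32), at the cost of a
# rounding error of at most half a quantization step per weight.
max_error = max(abs(a - b) for a, b in zip(weights, restored))
```

The trade-off is a roughly 4x smaller weight tensor against a bounded rounding error, which is why quantized models are normally re-evaluated before shipping.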

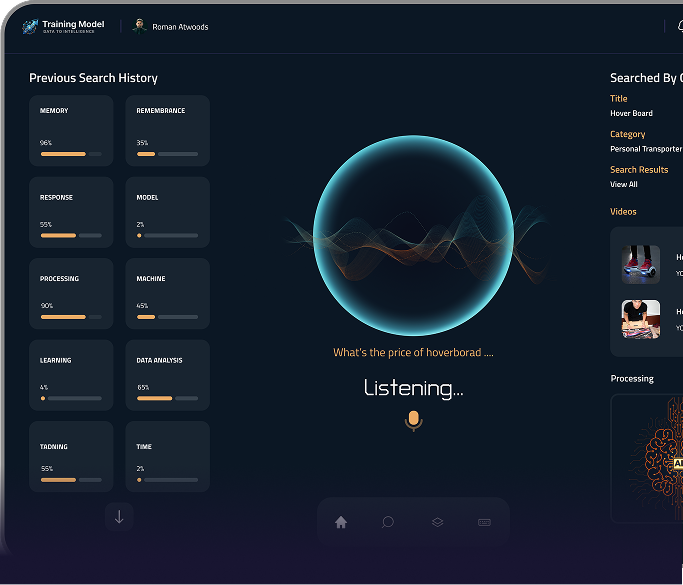

AI chatbot development involves building conversational AI applications that combine machine learning and natural language processing to automate user interactions. These chatbots deliver contextual responses, streamline customer engagement, and integrate with business systems across websites, mobile applications, and messaging platforms.

Skip the hiring process and get a senior TensorFlow and PyTorch engineer embedded in your ML project within days. From dataset audit and model architecture through Docker containerization and cloud or on-device deployment, we handle the full build end to end.

10+ ML engineers available now · TensorFlow, PyTorch & scikit-learn specialists · Computer vision, NLP & predictive analytics models shipped to production

We develop HIPAA-compliant healthcare apps, telemedicine platforms, EHR systems, and digital tools that enhance patient care and clinical workflows.

TensorFlow and PyTorch models trained on your proprietary dataset learn the patterns, terminology, and edge cases specific to your business, outperforming generic AI APIs on your exact use case. A churn prediction model trained on your customer behaviour data will always outperform a generic model trained on someone else's. Your data becomes a compounding technical asset.

Unlike rule-based systems that require manual updates, machine learning models retrain on new data automatically, improving prediction accuracy as your dataset grows. MLflow experiment tracking ensures every retraining cycle is versioned, evaluated against baseline performance, and deployed only when accuracy metrics improve. Your model gets smarter as your business scales.
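The "deploy only when accuracy metrics improve" rule can be sketched as a small promotion gate. This is an illustrative sketch in plain Python rather than MLflow code; `EvalResult`, `should_promote`, and the `min_gain` margin are hypothetical names and assumptions.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EvalResult:
    """Evaluation summary for one model version (hypothetical structure)."""
    version: str
    accuracy: float


def should_promote(candidate, baseline, min_gain=0.0):
    """Promote only if the candidate beats the baseline by more than min_gain."""
    return candidate.accuracy > baseline.accuracy + min_gain


baseline = EvalResult(version="v1", accuracy=0.89)
candidate = EvalResult(version="v2", accuracy=0.91)

promote = should_promote(candidate, baseline)                 # beats baseline
promote_strict = should_promote(candidate, baseline, 0.05)    # margin too small
```

A non-zero `min_gain` is one way to avoid promoting a model whose improvement is within evaluation noise.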

Models optimized via quantization and pruning and deployed via TensorFlow Lite or Core ML run inference directly on iOS and Android devices, eliminating server round-trip latency, removing cloud inference costs entirely, and enabling ML features that function fully offline. For high-frequency inference tasks like real-time image classification or text analysis, on-device ML reduces operational cost by orders of magnitude versus API-based alternatives.

Classification models, anomaly detection pipelines, and predictive analytics systems built with scikit-learn and TensorFlow automate decisions that would otherwise require manual review (fraud detection, document classification, quality control inspection, demand forecasting), processing thousands of inputs per second with consistent accuracy. Operational cost drops. Human capacity shifts to higher-value work.

Custom ML models run on your infrastructure, not a third-party API endpoint you have no control over. No vendor lock-in, no usage-based pricing that scales against you, no risk of the API changing or being deprecated. Your model, your data pipeline, your deployment, containerized with Docker and orchestrated via Kubernetes for full portability across cloud providers.

Generic vision and NLP APIs are trained on broad datasets that may not reflect your domain. CNN-based computer vision models trained on your labeled image data (product defects, medical scans, retail inventory) achieve significantly higher accuracy than general-purpose vision APIs on domain-specific classification tasks. BERT-based NLP models fine-tuned on your customer communications learn your exact terminology, product names, and classification categories in ways no pre-trained generic model is designed to do.

Every machine learning model we build runs on a Python stack selected for its track record in production ML environments, from data preprocessing and model training through experiment tracking, containerization, and deployment to cloud or on-device inference targets. Every technology choice below is justified by a specific requirement in the ML pipeline, not adopted because it is new.

We conduct stakeholder workshops to define business objectives, identify where ML can deliver measurable impact, and audit available datasets for volume, quality, and labeling requirements. This phase produces a technical ML specification, covering problem framing (classification vs regression vs detection), model architecture candidates, data pipeline requirements, performance success metrics, and a phased delivery roadmap.

We build data ingestion pipelines using Python, pandas, and NumPy, collecting, cleaning, deduplicating, and structuring datasets from your existing systems, databases, or third-party sources. For computer vision projects we manage image labeling pipelines; for NLP projects we handle corpus cleaning and tokenization. Data quality at this stage directly determines model accuracy, so we don't proceed to training until dataset integrity is validated.
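As a rough illustration of the cleaning and deduplication step, the sketch below uses pandas on a tiny in-memory table. The column names, the sample rows, and the `clean_dataset` helper are hypothetical; a real pipeline would read from your own systems and apply project-specific validation.

```python
import pandas as pd


def clean_dataset(df, required_columns):
    """Drop exact duplicate rows and rows missing any required value."""
    missing = [c for c in required_columns if c not in df.columns]
    if missing:
        # Fail early: a missing column is a data integrity problem.
        raise ValueError(f"missing required columns: {missing}")
    return (
        df.drop_duplicates()
          .dropna(subset=required_columns)
          .reset_index(drop=True)
    )


# Hypothetical customer records: one duplicate row, one missing value.
raw = pd.DataFrame({
    "customer_id": [1, 1, 2, 3],
    "monthly_spend": [20.0, 20.0, None, 35.5],
    "churned": [0, 0, 1, 0],
})

clean = clean_dataset(raw, ["customer_id", "monthly_spend", "churned"])
```

Raising on a missing column, rather than silently continuing, mirrors the point above about not training until dataset integrity is validated.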

We select and design model architectures matched to your problem type: CNN architectures for computer vision, transformer models and BERT fine-tuning for NLP, and gradient boosting and neural networks via scikit-learn and TensorFlow for predictive analytics. Architecture decisions are documented with rationale, including framework selection (TensorFlow vs PyTorch), layer configuration, and optimization strategy, before any training begins.

We train models on your prepared dataset using TensorFlow or PyTorch, running cross-validation to assess generalization, tracking every experiment with MLflow for full reproducibility, and applying hyperparameter tuning via grid search or Bayesian optimization to improve accuracy, precision, and recall against the benchmarks defined in the discovery phase. Training runs are versioned, no experiment is lost, and every performance improvement is traceable to specific configuration changes.
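Cross-validated grid search can be sketched with scikit-learn as follows. This is a simplified stand-in: it uses a synthetic dataset and a logistic regression model instead of a TensorFlow or PyTorch network, omits MLflow tracking, and the parameter grid is an arbitrary assumption.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.model_selection import GridSearchCV

# Synthetic binary classification data standing in for a prepared dataset.
X, y = make_classification(n_samples=300, n_features=8, random_state=0)

# Try each regularization strength with 5-fold cross-validation and
# rank the candidates by mean F1 score across the folds.
search = GridSearchCV(
    estimator=LogisticRegression(max_iter=1000),
    param_grid={"C": [0.01, 0.1, 1.0, 10.0]},
    cv=5,
    scoring="f1",
)
search.fit(X, y)

best_params = search.best_params_   # winning hyperparameter combination
best_score = search.best_score_     # its mean cross-validated F1 score
```

Grid search is exhaustive, so it suits small grids like this one; for larger search spaces, Bayesian optimization explores fewer configurations.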

We evaluate trained models against held-out test datasets, measuring accuracy, precision, recall, F1 score, and AUC-ROC depending on problem type, and stress-testing against edge cases and adversarial inputs your production environment is likely to encounter. Models proceed to deployment only when performance metrics meet the benchmarks defined in the discovery phase.
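For a binary classifier, the metrics named above follow directly from the counts of true positives, false positives, and false negatives. The sketch below computes them in plain Python and checks them against benchmark floors; the labels, predictions, and threshold values are made up for illustration.

```python
def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall, and F1 for binary labels (1 = positive)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    correct = sum(1 for t, p in pairs if t == p)

    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "accuracy": correct / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def meets_benchmarks(metrics, benchmarks):
    """True only if every benchmarked metric reaches its minimum value."""
    return all(metrics[name] >= floor for name, floor in benchmarks.items())


# Hypothetical held-out labels and model predictions.
y_true = [1, 1, 1, 0, 0, 0, 1, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]

metrics = classification_metrics(y_true, y_pred)
ready = meets_benchmarks(metrics, {"precision": 0.7, "recall": 0.7})
```

Here the model has 3 true positives, 1 false positive, and 1 false negative, so precision, recall, and F1 all come out to 0.75.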

We containerize models with Docker, deploy to cloud infrastructure via Kubernetes or AWS SageMaker, and expose inference via FastAPI REST endpoints for integration with your existing systems. For mobile deployment targets we convert and optimize models to TensorFlow Lite or Core ML, applying quantization and pruning to meet on-device latency and size requirements.

Post-deployment, we monitor model performance via MLflow tracking and custom dashboards, detecting data drift, prediction accuracy degradation, and distribution shift that indicate retraining is needed. Scheduled retraining pipelines on new data keep your models current as your business and data evolve, maintaining long-term accuracy without requiring manual intervention each time data distributions shift.
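One common way to quantify data drift for a single numeric feature is the population stability index (PSI). The text above does not name a specific drift metric, so treat this plain-Python sketch as one possible choice; the bin count, the 0.2 alert threshold (a widely used rule of thumb), and the sample data are assumptions.

```python
import math


def population_stability_index(reference, current, bins=10):
    """PSI between a reference sample and a current sample of one feature."""
    lo, hi = min(reference), max(reference)
    width = (hi - lo) / bins or 1.0  # avoid zero width for constant features

    def bucket_shares(values):
        counts = [0] * bins
        for v in values:
            idx = int((v - lo) / width)
            # Values outside the reference range fall into the edge buckets.
            counts[max(0, min(bins - 1, idx))] += 1
        # Small floor so empty buckets do not produce log(0).
        return [max(c / len(values), 1e-6) for c in counts]

    ref = bucket_shares(reference)
    cur = bucket_shares(current)
    return sum((c - r) * math.log(c / r) for r, c in zip(ref, cur))


# Hypothetical feature values at training time and in production.
training_values = [i / 100 for i in range(100)]
production_values = [0.5 + i / 200 for i in range(100)]

psi_same = population_stability_index(training_values, training_values)
psi_shifted = population_stability_index(training_values, production_values)
needs_retraining = psi_shifted > 0.2  # assumed alert threshold
```

In a scheduled monitoring job, a check like this would run per feature, with a threshold breach triggering the retraining pipeline.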

Every model we deliver is trained or fine-tuned on your proprietary data, not a generic pre-trained model handed to you as a black box. We engineer the full data pipeline from your existing systems, handle preprocessing and feature engineering with pandas and NumPy, and train TensorFlow or PyTorch models that learn the specific patterns in your business data. The result is a model built to outperform generic AI APIs on your exact use case.

We own every phase of your ML project, from dataset audit and architecture design through model training, MLflow experiment tracking, Docker containerization, and deployment via Kubernetes or AWS SageMaker. No handoffs to separate data science and DevOps teams. One engineering team owns the problem end-to-end and is accountable for deployed model accuracy under real-world conditions, not just training benchmark results.

We optimize trained models for on-device deployment via TensorFlow Lite and Core ML, applying quantization and pruning to reduce model size and inference latency for iOS and Android targets. This capability eliminates server infrastructure costs for high-frequency inference tasks and enables ML features that run fully offline. Very few ML development teams have both the model training depth and the mobile deployment expertise to deliver this end-to-end.

Our computer vision pipelines use CNN architectures, including YOLO and Faster R-CNN, trained on your labeled image data for classification, object detection, and anomaly detection tasks specific to your industry. Our NLP systems use Hugging Face transformer models and BERT fine-tuned on your text corpus, not generic sentiment APIs. Domain-specific training produces accuracy levels no off-the-shelf API can match on your data.

Every training run is tracked with MLflow, logging hyperparameters, evaluation metrics, and model artifacts for full experiment reproducibility. Every model version is stored with its training data snapshot and configuration, meaning you can audit, compare, and roll back to any previous model state. No black-box results, no lost experiments, no deployments that cannot be traced back to a specific training run and dataset version.

Model accuracy degrades over time as real-world data distributions shift away from training data, a problem most ML vendors ignore after deployment. We implement ongoing monitoring pipelines that detect data drift and accuracy degradation, trigger retraining on new data, and validate performance improvements before promoting updated models to the live environment. Your ML system keeps improving after launch rather than quietly degrading as your data evolves.

DentaSmart is a mobile app that uses AI and 3D tech to simplify dental care, from early diagnosis to personalized treatment.

From CTOs deploying greenfield predictive analytics models to founders building computer vision pipelines and on-device TensorFlow Lite inference systems, here is what clients say about the ML engineering quality, model accuracy, and delivery process after working with ETechViral.

Amir Khan and his team is very responsible and works well. We have worked together and have been able to produce a good quality application. It has been easy to manage the project and they has delivered well. I would recommend others to use his services as they provide 100% perfect services.

No vague proposals. No generic AI tool recommendations. Just a free 30-minute consultation with our ML engineers, and a clear project scope with model architecture recommendations and dataset requirements delivered within 48 hours.

10+ Machine Learning engineers available now · TensorFlow · PyTorch · scikit-learn · TensorFlow Lite · Core ML · MLflow · 5+ years delivery experience